WorldStereo: Bridging Camera-Guided Video Generation and Scene Reconstruction via 3D Geometric Memories

Yisu Zhang1,2* Chenjie Cao2* Tengfei Wang2† Xuhui Zuo2 Junta Wu2 Jianke Zhu1‡ Chunchao Guo2

1Zhejiang University

2Tencent Hunyuan

*Equal Contribution †Project Lead ‡Corresponding Author

📅 News

- [2026.02] 🎉 WorldStereo is accepted by CVPR 2026!
- [2026.03] 📄 Paper is now available on arXiv: https://arxiv.org/abs/2602.24233
- [2026.04] 🚀 Code and model weights of WorldStereo 2.0 are now released!
- [2026.04] 🚀 HY-World 2.0 is now released: https://github.com/Tencent-Hunyuan/HY-World-2.0

✅ TODO List

- Release inference code and model weights of WorldStereo 2.0

- Release data pre-processing pipelines for panoramic and multi-trajectory scenes

📖 Abstract

We propose WorldStereo, a novel framework that bridges camera-guided video generation and 3D reconstruction via two dedicated geometric memory modules:

- Global-Geometric Memory (GGM) enables precise camera control while injecting coarse structural priors through incrementally updated point clouds via a ControlNet branch.

- Spatial-Stereo Memory (SSM) constrains the model's attention receptive fields with 3D correspondences to focus on fine-grained details from the memory bank.

Together, these components enable WorldStereo to generate multi-view-consistent videos under precise camera control, facilitating high-quality 3D reconstruction. Furthermore, WorldStereo runs efficiently by leveraging a distribution-matching distilled (DMD) video diffusion model (VDM) backbone without joint training.

🎬 Results

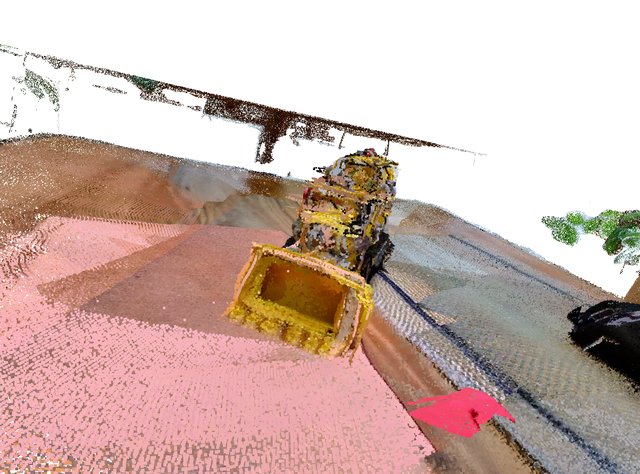

3D Reconstruction from a Single Image

Given a single reference image, WorldStereo generates multi-view consistent videos and reconstructs a dense 3D point cloud. Below are example results on two scenes.

Scene: Kitchen | Input image → Point cloud (5 views)

Camera Control

| Methods | Camera Metrics | | | Visual Quality | | | |
|---|---|---|---|---|---|---|---|
| | RotErr ↓ | TransErr ↓ | ATE ↓ | Q-Align ↑ | CLIP-IQA+ ↑ | Laion-Aes ↑ | CLIP-I ↑ |
| SEVA | 1.690 | 1.578 | 2.879 | 3.232 | 0.479 | 4.623 | 77.16 |
| Gen3C | 0.944 | 1.580 | 2.789 | 3.353 | 0.489 | 4.863 | 82.33 |
| WorldStereo | 0.762 | 1.245 | 2.141 | 4.149 | 0.547 | 5.257 | 89.05 |
| WorldStereo 2.0 | 0.492 | 0.968 | 1.768 | 4.205 | 0.544 | 5.266 | 89.43 |
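The table does not define RotErr and TransErr. A common convention in camera-control evaluation is the geodesic angle between estimated and ground-truth rotations and the Euclidean distance between translations. The sketch below illustrates that convention only; the unit, scale alignment, and per-trajectory aggregation behind the reported numbers are not specified here, and the function names are hypothetical.

```python
import math


def rotation_error_deg(R_gt, R_pred):
    """Geodesic angle in degrees between two 3x3 rotation matrices (nested lists)."""
    # trace(R_gt^T @ R_pred) equals the sum of element-wise products.
    trace = sum(R_gt[i][j] * R_pred[i][j] for i in range(3) for j in range(3))
    # Clamp to [-1, 1] to guard against floating-point drift before acos.
    cos_angle = max(-1.0, min(1.0, (trace - 1.0) / 2.0))
    return math.degrees(math.acos(cos_angle))


def translation_error(t_gt, t_pred):
    """Euclidean distance between two camera translations."""
    return math.dist(t_gt, t_pred)


def rot_z(deg):
    """Helper for the example: rotation about the z-axis by `deg` degrees."""
    c, s = math.cos(math.radians(deg)), math.sin(math.radians(deg))
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]


# A 30-degree yaw offset and a 3-4-5 translation offset.
print(rotation_error_deg(rot_z(0), rot_z(30)))
print(translation_error([0.0, 0.0, 0.0], [3.0, 4.0, 0.0]))
```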

Single-View-Generated Reconstruction

| Methods | Tanks-and-Temples | | | | MipNeRF360 | | | |
|---|---|---|---|---|---|---|---|---|
| | Precision ↑ | Recall ↑ | F1-Score ↑ | AUC ↑ | Precision ↑ | Recall ↑ | F1-Score ↑ | AUC ↑ |
| SEVA | 33.59 | 35.34 | 36.73 | 51.03 | 22.38 | 55.63 | 28.75 | 46.81 |
| Gen3C | 46.73 | 25.51 | 31.24 | 42.44 | 23.28 | 75.37 | 35.26 | 52.10 |
| Lyra | 50.38 | 28.67 | 32.54 | 43.05 | 30.02 | 58.60 | 36.05 | 49.89 |
| FlashWorld | 26.58 | 20.72 | 22.29 | 30.45 | 35.97 | 53.77 | 42.60 | 53.86 |
| WorldStereo 2.0 | 43.62 | 41.02 | 41.43 | 58.19 | 43.19 | 65.32 | 51.27 | 65.79 |
| WorldStereo 2.0 (DMD) | 40.41 | 44.41 | 43.16 | 60.09 | 42.34 | 64.83 | 50.52 | 65.64 |
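For readers unfamiliar with point-cloud precision, recall, and F1, the following is a minimal sketch of the usual distance-threshold formulation. Precision is the share of predicted points within a threshold of the ground truth, and recall is the share of ground-truth points within that threshold of the prediction. The threshold value, the alignment step, and the AUC computation used for the table are not given here, so the function names and inputs below are illustrative assumptions.

```python
import math


def nearest_distances(src, dst):
    """Distance from each point in `src` to its nearest neighbour in `dst` (brute force)."""
    return [min(math.dist(p, q) for q in dst) for p in src]


def f_score(pred, gt, threshold):
    """Return (precision, recall, F1) in percent at a given distance threshold."""
    d_pred = nearest_distances(pred, gt)  # drives precision
    d_gt = nearest_distances(gt, pred)    # drives recall
    precision = 100.0 * sum(d <= threshold for d in d_pred) / len(d_pred)
    recall = 100.0 * sum(d <= threshold for d in d_gt) / len(d_gt)
    if precision + recall == 0:
        return precision, recall, 0.0
    return precision, recall, 2 * precision * recall / (precision + recall)


# Toy clouds: two predicted points match the ground truth, one is an outlier.
pred = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (5.0, 0.0, 0.0)]
gt = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.05)]
print(f_score(pred, gt, threshold=0.1))
```

The brute-force search is quadratic in the number of points; a KD-tree would be the practical choice for dense clouds.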

🆕 WorldStereo 2.0 vs. 1.0

WorldStereo 2.0 introduces four key improvements over the original version:

| | WorldStereo 1.0 | WorldStereo 2.0 |
|---|---|---|
| Latent Space | Standard video latent space | Keyframe latent space: encodes each frame independently, substantially improving the visual quality of generated novel views and fully supporting parallel encoding/decoding |
| Memory Mechanism | Cross-attention to retrieved reference frames | Stereo stitching in the main branch: reference views are spatially concatenated with target frames along the width dimension in the main DiT branch, enabling stronger and more direct memory fusion |
| Backbone Fine-tuning | Frozen backbone | Partial backbone fine-tuning: backbone weights are selectively updated to adapt to the keyframe latent space and improve overall generation quality |
| Training Data | Limited camera trajectories | Expanded UE rendering data: significantly more Unreal Engine rendered scenes with diverse and precise camera motions, leading to stronger camera control and memory capabilities |
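The stereo stitching row can be pictured as a concatenation along the width axis. The sketch below is illustrative only: the latent shape `(C, T, H, W)`, the placement of the target on the left, and the function names are assumptions, not details taken from the released model.

```python
import numpy as np


def stitch_along_width(target, reference):
    """Concatenate target and reference latents of shape (C, T, H, W) along width."""
    if target.shape[:-1] != reference.shape[:-1]:
        raise ValueError("target and reference must agree on C, T and H")
    # Assumed layout: target on the left, reference on the right.
    return np.concatenate([target, reference], axis=-1)


def split_target(stitched, target_width):
    """Recover the target half after the stitched pair has been processed."""
    return stitched[..., :target_width]


# Hypothetical latent sizes, chosen only for the example.
target = np.zeros((16, 4, 30, 52), dtype=np.float32)
reference = np.ones((16, 4, 30, 52), dtype=np.float32)
stitched = stitch_along_width(target, reference)
print(stitched.shape)  # width doubles; all other axes are unchanged
```

Because both views sit in the same token grid, ordinary self-attention in the main branch can attend across them without a separate cross-attention path.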

More details on WorldStereo 2.0 are given in HY-World 2.0.

⚙️ Installation

1. Clone the repository:

```bash
git clone https://github.com/FuchengSu/WorldStereo.git
cd WorldStereo
```

2. Install core dependencies:

```bash
conda create -n worldstereo python=3.11
conda activate worldstereo
pip install -r requirements.txt
```

3. Install PyTorch3D (required for point cloud rendering):

```bash
pip install --no-build-isolation "git+https://github.com/facebookresearch/pytorch3d.git@stable"
```

4. Install MoGe (monocular depth estimation):

```bash
pip install git+https://github.com/microsoft/MoGe.git@0286b495230a074aadf1c76cc5c679e943e5d1c6
```

5. (Optional) Install third-party reconstruction module for WorldMirror reconstruction:

```bash
mkdir third_party
cd third_party
git clone https://github.com/Tencent-Hunyuan/HY-World-2.0.git
pip install -r HY-World-2.0/requirements.txt
```

Note: `third_party/HY-World-2.0` is required only for `apply_worldmirror` post-processing (multi-view depth consistency and Gaussian Splatting reconstruction). You can skip it for basic video generation.

🚀 Quick Start

Model Variants

WorldStereo ships three model variants, each suited to a different use case:

| Model Type | Entry Point | Description |
|---|---|---|
| `worldstereo-camera` | `run_camera_control.py` | Camera control only; single-view input |
| `worldstereo-memory` | `run_camera_control.py` / `run_multi_traj.py` | Full model with GGM + SSM; multi-view consistent generation; best quality |
| `worldstereo-memory-dmd` | `run_camera_control.py` / `run_multi_traj.py` | DMD distillation variant; 4-step inference, fastest |

Models are automatically downloaded from HuggingFace Hub (hanshanxue/WorldStereo) on first run.

Single-View Camera Control

Generate a video from a single image along a specified camera trajectory:

```bash
python run_camera_control.py \
    --model_type worldstereo-camera \
    --input_path examples/images \
    --output_path outputs \
    --seed 1024
```

Multi-GPU Inference (Sequence Parallel)

Scale to multiple GPUs using Sequence Parallelism (SP) and FSDP:

```bash
torchrun --nproc_per_node=8 run_camera_control.py \
    --model_type worldstereo-memory \
    --input_path examples/panorama \
    --output_path outputs \
    --fsdp
```

Multi-Trajectory Inference (Panorama / Reconstruction)

For panoramic scene generation or 3D reconstruction from multiple trajectories:

```bash
# Panoramic scene generation
torchrun --nproc_per_node=8 run_multi_traj.py \
    --model_type worldstereo-memory \
    --task_type panorama \
    --input_path examples/panorama \
    --output_path outputs \
    --fsdp

# Panoramic scene generation (DMD fast variant)
torchrun --nproc_per_node=8 run_multi_traj.py \
    --model_type worldstereo-memory-dmd \
    --task_type panorama \
    --input_path examples/panorama \
    --output_path outputs \
    --fsdp

# 3D scene reconstruction
torchrun --nproc_per_node=8 run_multi_traj.py \
    --model_type worldstereo-memory \
    --task_type reconstruction \
    --input_path examples/reconstruction \
    --output_path outputs \
    --fsdp

# 3D scene reconstruction (DMD fast variant)
torchrun --nproc_per_node=8 run_multi_traj.py \
    --model_type worldstereo-memory-dmd \
    --task_type reconstruction \
    --input_path examples/reconstruction \
    --output_path outputs \
    --fsdp
```
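The four invocations differ only in `--model_type` and `--task_type`, so a small launcher can generate them. This is a convenience sketch, not part of the repository: `build_multi_traj_command` is a hypothetical helper, and the pairing of each task with its example folder simply mirrors the commands above.

```python
import shlex

# Variants listed for run_multi_traj.py in the Model Variants table.
MODEL_TYPES = ("worldstereo-memory", "worldstereo-memory-dmd")
# Example input folders, taken from the commands above.
TASK_INPUTS = {
    "panorama": "examples/panorama",
    "reconstruction": "examples/reconstruction",
}


def build_multi_traj_command(model_type, task_type, output_path="outputs",
                             num_gpus=8, fsdp=True):
    """Return the torchrun argument list for one multi-trajectory run."""
    if model_type not in MODEL_TYPES:
        raise ValueError(f"unsupported model_type: {model_type}")
    if task_type not in TASK_INPUTS:
        raise ValueError(f"unsupported task_type: {task_type}")
    cmd = [
        "torchrun", f"--nproc_per_node={num_gpus}", "run_multi_traj.py",
        "--model_type", model_type,
        "--task_type", task_type,
        "--input_path", TASK_INPUTS[task_type],
        "--output_path", output_path,
    ]
    if fsdp:
        cmd.append("--fsdp")
    return cmd


# Print every model/task combination as a shell-ready command line.
for task in TASK_INPUTS:
    for model in MODEL_TYPES:
        print(shlex.join(build_multi_traj_command(model, task)))
```

The returned list can be passed directly to `subprocess.run(cmd, check=True)` from the repository root.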

WorldMirror 3D Reconstruction (Optional)

After running run_multi_traj.py, the memory bank is automatically exported to a WorldMirror-compatible format under <output_path>/<scene>/world_mirror_data/<model_type>/. You can then run feedforward 3D reconstruction with HY-World 2.0:

```bash
# Requires: pip install -r third_party/HY-World-2.0/requirements.txt
cd third_party/HY-World-2.0
torchrun --nproc_per_node=8 -m hyworld2.worldrecon.pipeline \
    --input_path ../../outputs/<scene>/world_mirror_data/<model_type>/images \
    --prior_cam_path ../../outputs/<scene>/world_mirror_data/<model_type>/cameras.json \
    --strict_output_path ../../outputs/<scene>/world_mirror_data/<model_type>/results \
    --target_size 832 --use_fsdp --enable_bf16 --no_save_normal --no_save_gs --no_sky_mask \
    --apply_edge_mask --apply_confidence_mask --confidence_percentile 15.0 --compress_pts --no_interactive \
    --disable_heads gs points
```

This produces metric-scale depth, surface normals, camera poses, a dense point cloud (.ply), and optionally Gaussian Splat renderings from the generated multi-view frames.
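The three paths passed to the reconstruction command all derive from the export root described above. The helper below is a hypothetical sketch that builds them and reports which inputs are missing before launching; it is not part of the repository, and the scene name in the example is made up.

```python
from pathlib import Path


def world_mirror_paths(output_path, scene, model_type):
    """Build the export paths used by the reconstruction command."""
    root = Path(output_path) / scene / "world_mirror_data" / model_type
    return {
        "images": root / "images",         # value for --input_path
        "cameras": root / "cameras.json",  # value for --prior_cam_path
        "results": root / "results",       # value for --strict_output_path
    }


def missing_inputs(paths):
    """Names of the required inputs that do not exist on disk yet."""
    return [name for name in ("images", "cameras") if not paths[name].exists()]


# "kitchen" is a placeholder scene name.
paths = world_mirror_paths("outputs", "kitchen", "worldstereo-memory")
print(paths["cameras"].as_posix())
print(missing_inputs(paths))
```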

Python API

```python
import torch

from models.worldstereo_wrapper import WorldStereo

device = torch.device("cuda:0")
worldstereo = WorldStereo.from_pretrained(
    "hanshanxue/WorldStereo",
    subfolder="worldstereo-memory",  # or "worldstereo-camera" / "worldstereo-memory-dmd"
    sp_world_size=1,
    fsdp=False,
    device=device,
)

# `pipeline_inputs` is a dict of pipeline keyword arguments prepared by the caller.
output = worldstereo(**pipeline_inputs)
```

CLI Reference

run_camera_control.py

| Flag | Default | Description |
|---|---|---|
| `--model_type` | `worldstereo-camera` | Model variant to use |
| `--input_path` | `examples/images` | Input scene directory |
| `--output_path` | `outputs` | Output directory |
| `--local_files_only` | `False` | Use locally cached weights instead of downloading |
| `--fsdp` | `False` | Enable FSDP model sharding |
| `--seed` | `1024` | Random seed |

run_multi_traj.py (additional flags)

| Flag | Default | Description |
|---|---|---|
| `--task_type` | `panorama` | `panorama` or `reconstruction` |
| `--align_nframe` | `8` | Frames per clip saved for updating the memory bank |
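The two flag tables map naturally onto an `argparse` definition. The sketch below mirrors the documented names and defaults for use in wrapper scripts. It is an assumption-laden reconstruction rather than the parser from the repository, which may define further options or parse these differently.

```python
import argparse

MODEL_TYPES = ["worldstereo-camera", "worldstereo-memory", "worldstereo-memory-dmd"]


def build_parser(multi_traj=False):
    """Parser mirroring the documented flags; `multi_traj` adds the extra two."""
    parser = argparse.ArgumentParser(description="WorldStereo flags (sketch)")
    parser.add_argument("--model_type", default="worldstereo-camera", choices=MODEL_TYPES)
    parser.add_argument("--input_path", default="examples/images")
    parser.add_argument("--output_path", default="outputs")
    # Boolean switches default to False and become True when passed.
    parser.add_argument("--local_files_only", action="store_true")
    parser.add_argument("--fsdp", action="store_true")
    parser.add_argument("--seed", type=int, default=1024)
    if multi_traj:
        parser.add_argument("--task_type", default="panorama",
                            choices=["panorama", "reconstruction"])
        parser.add_argument("--align_nframe", type=int, default=8)
    return parser


# Parse an example command line for the multi-trajectory script.
args = build_parser(multi_traj=True).parse_args(
    ["--model_type", "worldstereo-memory", "--task_type", "reconstruction", "--fsdp"]
)
print(vars(args))
```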

📂 Input Data Format

Camera-Only Inference (examples/images/)

```
<scene>/
├── image.png     # reference image
├── prompt.json   # text descriptions at three verbosity levels
│                 # {"short caption": ..., "medium caption": ..., "long caption": ...}
└── camera.json   # camera trajectory
                  # {"motion_list": [...], "extrinsic": [...], "intrinsic": [...]}
```
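A scene folder can be checked against this layout before launching inference. The validator below is a hypothetical helper written from the layout above. It checks only that the files and top-level keys exist, since the shape and convention of the `extrinsic` and `intrinsic` entries are not documented here.

```python
import json
from pathlib import Path

# Top-level keys listed in the layout above.
PROMPT_KEYS = ("short caption", "medium caption", "long caption")
CAMERA_KEYS = ("motion_list", "extrinsic", "intrinsic")


def check_camera_scene(scene_dir):
    """Return a list of problems found in a camera-only scene directory."""
    scene = Path(scene_dir)
    problems = []
    if not (scene / "image.png").is_file():
        problems.append("missing image.png")
    for name, keys in (("prompt.json", PROMPT_KEYS), ("camera.json", CAMERA_KEYS)):
        path = scene / name
        if not path.is_file():
            problems.append(f"missing {name}")
            continue
        data = json.loads(path.read_text(encoding="utf-8"))
        problems += [f"{name}: missing key '{key}'" for key in keys if key not in data]
    return problems
```

An empty list means the folder matches the documented layout; for example, `check_camera_scene("examples/images/<scene>")` can be run on each scene before calling `run_camera_control.py`.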

Memory-Augmented Multi-Trajectory (examples/panorama/, examples/reconstruction/)

```
<scene>/
├── panorama.png      # (optional) full panorama; triggers VLM single-path inference
├── meta_info.json    # {"scene_type": "perspective" | "panorama"}
├── start_frame.png   # reference start image for depth initialization
└── render_results/
    └── <view_id>/
        └── <traj_id>/
            ├── render.mp4        # pre-rendered geometry video (point cloud warp)
            ├── render_mask.mp4   # binary occlusion mask video
            └── camera.json       # {"extrinsic": [...], "intrinsic": [...]}
```
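The nested `render_results/<view_id>/<traj_id>/` layout can be enumerated with a short directory walk. The function below is a hypothetical sketch that lists every trajectory and any of the three expected files it lacks; it does not inspect the video or camera contents.

```python
from pathlib import Path

# Files expected in each trajectory folder, per the layout above.
REQUIRED_FILES = ("render.mp4", "render_mask.mp4", "camera.json")


def list_trajectories(scene_dir):
    """Return (view_id, traj_id, missing_files) tuples, sorted by folder name."""
    root = Path(scene_dir) / "render_results"
    found = []
    if not root.is_dir():
        return found
    for view_dir in sorted(p for p in root.iterdir() if p.is_dir()):
        for traj_dir in sorted(p for p in view_dir.iterdir() if p.is_dir()):
            missing = [n for n in REQUIRED_FILES if not (traj_dir / n).is_file()]
            found.append((view_dir.name, traj_dir.name, missing))
    return found
```

A trajectory is ready for inference when its `missing_files` entry is empty.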

🔧 Architecture

Model Variants

WorldStereo defines two transformer architectures in models/worldstereo.py, both extending WanTransformer3DModel from diffusers:

- `WorldStereoModel`: Wan DiT backbone + ControlNet. Used by `worldstereo-camera`. The ControlNet encodes rendered point cloud geometry and camera embeddings, injecting residuals at each transformer block.
- `WorldStereoRefSModel`: Extends `WorldStereoModel` with `WanTransformerSparseSpatialBlock` layers. These SSM blocks perform sparse attention over retrieved reference frames, guided by 3D correspondences. Used by `worldstereo-memory` and `worldstereo-memory-dmd`.

Inference Pipelines

Three pipelines are provided under models/pipelines/, selected automatically based on model_type in the config:

| Pipeline | Class | Mode |
|---|---|---|
| `pipeline_pcd_keyframe.py` | `KFPCDControllerPipeline` | Camera; standard DDIM sampling |
| `pipeline_ref_keyframe.py` | `KFPCDControllerRefPipeline` | Camera + GGM + SSM; standard DDIM sampling |
| `pipeline_dmd_keyframe.py` | `RefKFDMDGeneratorPipeline` | Camera + GGM + SSM; 4-step DMD distillation |

3D Memory Bank

The memory bank (src/retrieval_wm.py) manages the growing 3D representation across trajectories:

- Init — MoGe depth estimation on the start frame lifts it to a point cloud.

- Retrieve — For each new target trajectory, the most relevant reference frames are selected via FOV-overlap scoring combined with DINOv2 image features and quality-aware furthest-point sampling.

- Update — After generation, new frames and their estimated depths are appended to the bank.

- Reconstruction — Feedforward reconstruction via HY-World 2.0 WorldMirror enforces multi-view depth consistency; final global alignment produces a unified point cloud.
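The Retrieve step mentions furthest-point sampling. As a point of reference, plain greedy furthest-point sampling over camera centers can be sketched as below. This covers only the spatial-spread part: the FOV-overlap scoring, DINOv2 features, and quality weighting described above are not modeled, and the function name and inputs are hypothetical.

```python
import math


def farthest_point_sampling(points, k, start=0):
    """Greedy FPS: return indices of up to k points that are mutually far apart."""
    if not points or k <= 0:
        return []
    k = min(k, len(points))
    selected = [start]
    # Distance from every point to its nearest already-selected point.
    min_dist = [math.dist(p, points[start]) for p in points]
    while len(selected) < k:
        # Pick the point that is farthest from the current selection.
        nxt = max(range(len(points)), key=lambda i: min_dist[i])
        selected.append(nxt)
        min_dist = [min(d, math.dist(p, points[nxt])) for d, p in zip(min_dist, points)]
    return selected


# Toy camera centers along a line; nearby cameras are skipped in favour of spread.
centers = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (10.0, 0.0, 0.0), (5.0, 0.0, 0.0)]
print(farthest_point_sampling(centers, k=3))
```

Selecting spread-out references reduces redundancy among retrieved frames, which is presumably why a sampling step of this kind is combined with relevance scoring.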

Distributed Inference

WorldStereo supports two parallelism strategies:

- Sequence Parallel (SP): The sequence dimension is sharded across the SP group at each attention layer (`models/attention.py`). Controlled by `torchrun --nproc_per_node`.
- FSDP: Fully Sharded Data Parallel wraps both the transformer and auxiliary encoders. Enabled with `--fsdp`. Requires a `device_mesh` with `("rep", "shard")` dimensions.
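To make the idea of sharding the sequence dimension concrete, the sketch below computes which contiguous slice of a token sequence each rank would own. It illustrates the partitioning arithmetic only. The code in `models/attention.py` may pad, require divisibility, or lay tokens out differently, and the communication between ranks is not shown.

```python
def shard_bounds(seq_len, world_size, rank):
    """Return the [start, end) token range owned by `rank` in a contiguous split."""
    if not 0 <= rank < world_size:
        raise ValueError("rank must be in [0, world_size)")
    base, extra = divmod(seq_len, world_size)
    # The first `extra` ranks take one additional token each.
    start = rank * base + min(rank, extra)
    end = start + base + (1 if rank < extra else 0)
    return start, end


# Ten tokens over four ranks: the shards are contiguous and cover the sequence.
print([shard_bounds(10, 4, r) for r in range(4)])
```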

🤝 Acknowledgements

WorldStereo builds upon the following excellent works:

- Wan — Video DiT backbone

- HunyuanVideo-1.5 — Components of sequence parallel and video generation model

- MoGe — Monocular geometry estimation

- HY-World 2.0 — WorldMirror reconstruction module

- diffusers — Pipeline and model utilities

📝 Citation

If you find WorldStereo useful in your research, please cite:

```bibtex
@article{zhang2026worldstereo,
  title={WorldStereo: Bridging Camera-Guided Video Generation and Scene Reconstruction via 3D Geometric Memories},
  author={Zhang, Yisu and Cao, Chenjie and Wang, Tengfei and Zuo, Xuhui and Wu, Junta and Zhu, Jianke and Guo, Chunchao},
  journal={arXiv preprint arXiv:2603.02049},
  year={2026}
}
```