oh — OpenHarness: Open Agent Harness

OpenHarness delivers core lightweight agent infrastructure: tool-use, skills, memory, and multi-agent coordination.

Join the community and contribute harnesses for open agent development.

One Command (oh) to Launch OpenHarness and Unlock All Agent Harnesses.

Supports CLI agent integration including OpenClaw, nanobot, Cursor, and more.

✨ OpenHarness's Key Harness Features

🔄 Agent Loop

- Streaming Tool-Call Cycle
- API Retry with Exponential Backoff
- Parallel Tool Execution
- Token Counting & Cost Tracking

🔧 Harness Toolkit

- 43 Tools (File, Shell, Search, Web, MCP)
- On-Demand Skill Loading (.md)
- Plugin Ecosystem (Skills + Hooks + Agents)
- Compatible with anthropics/skills & plugins

🧠 Context & Memory

- CLAUDE.md Discovery & Injection
- Context Compression (Auto-Compact)
- MEMORY.md Persistent Memory
- Session Resume & History

🛡️ Governance

- Multi-Level Permission Modes
- Path-Level & Command Rules
- PreToolUse / PostToolUse Hooks
- Interactive Approval Dialogs

🤝 Swarm Coordination

- Subagent Spawning & Delegation
- Team Registry & Task Management
- Background Task Lifecycle
- ClawTeam Integration (Roadmap)

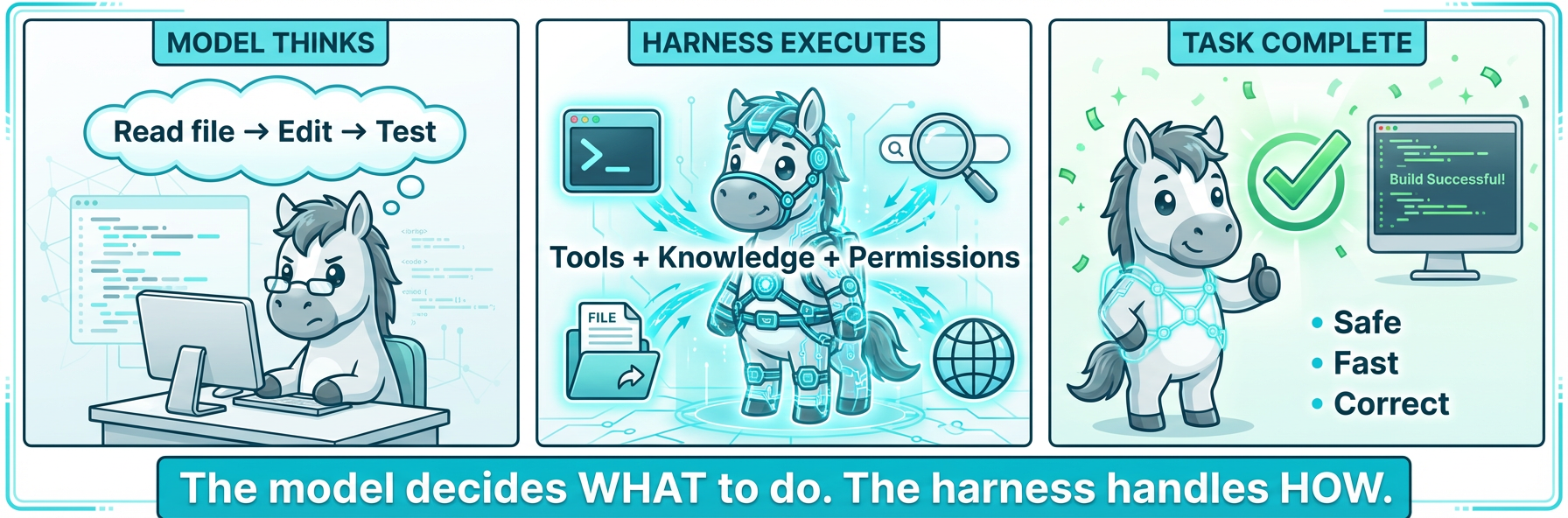

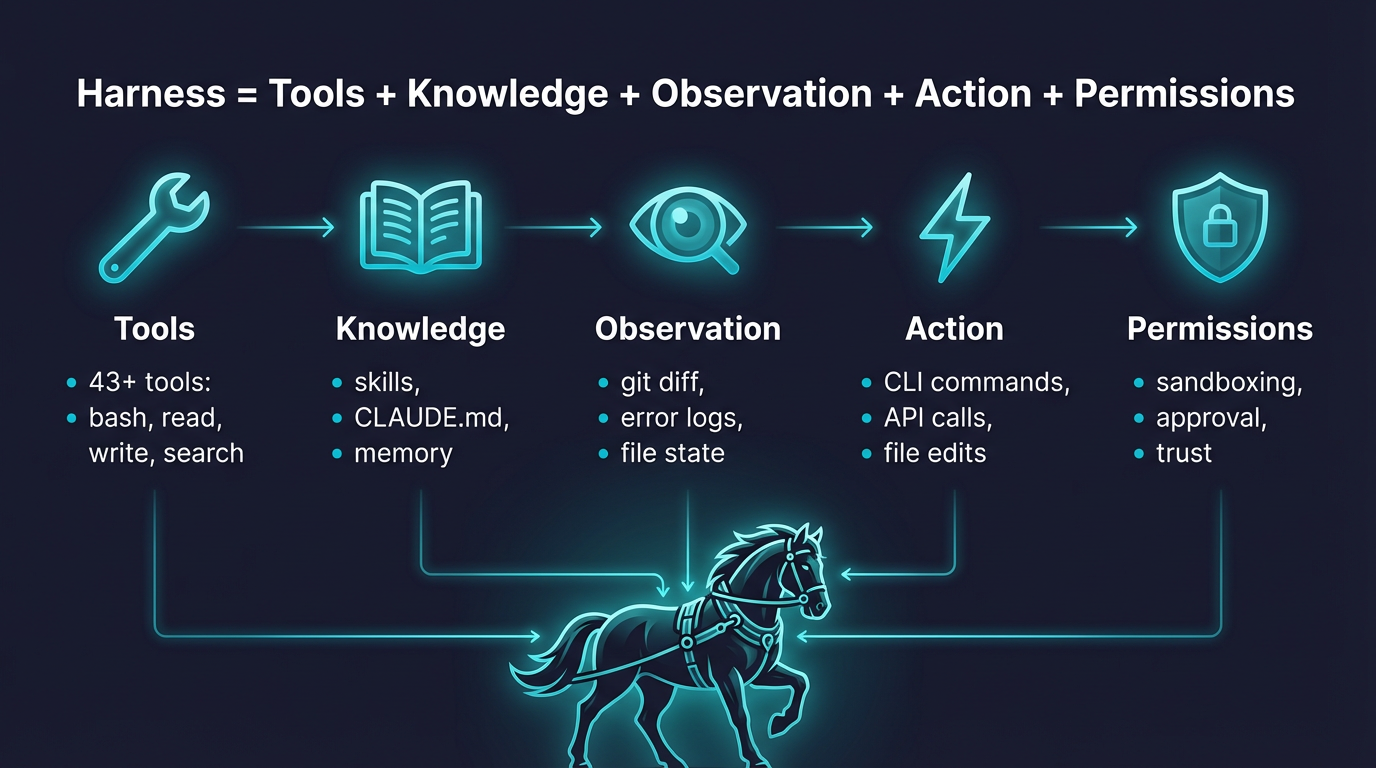

🤔 What is an Agent Harness?

An Agent Harness is the complete infrastructure that wraps around an LLM to make it a functional agent. The model provides intelligence; the harness provides hands, eyes, memory, and safety boundaries.

OpenHarness is an open-source Python implementation designed for researchers, builders, and the community:

- Understand how production AI agents work under the hood

- Experiment with cutting-edge tools, skills, and agent coordination patterns

- Extend the harness with custom plugins, providers, and domain knowledge

- Build specialized agents on top of proven architecture

📰 What's New

- 2026-04-01 🎨 v0.1.0: Initial OpenHarness open-source release featuring the complete Harness architecture.

Start here: Quick Start · Provider Compatibility · Showcase · Contributing · Changelog

🚀 Quick Start

One-Click Install

The fastest way to get started — a single command handles OS detection, dependency checks, and installation:

curl -fsSL https://raw.githubusercontent.com/HKUDS/OpenHarness/main/scripts/install.sh | bash

Options:

| Flag | Description |
|---|---|
| --from-source | Clone from GitHub and install in editable mode (pip install -e .) |
| --with-channels | Also install IM channel dependencies (slack-sdk, python-telegram-bot, discord.py) |

    # Install from source (for contributors / latest code)
    curl -fsSL https://raw.githubusercontent.com/HKUDS/OpenHarness/main/scripts/install.sh | bash -s -- --from-source

    # Install with IM channel support
    curl -fsSL https://raw.githubusercontent.com/HKUDS/OpenHarness/main/scripts/install.sh | bash -s -- --with-channels

    # Or run locally after cloning
    bash scripts/install.sh --from-source --with-channels

The script will:

- Detect your OS (Linux / macOS / WSL)

- Verify Python ≥ 3.10 and Node.js ≥ 18

- Install OpenHarness via pip

- Set up the React TUI (npm install) if Node.js is available

- Create the ~/.openharness/ config directory

- Confirm with oh --version

Prerequisites

- Python 3.10+ and uv

- Node.js 18+ (optional, for the React terminal UI)

- An LLM API key

One-Command Demo

ANTHROPIC_API_KEY=your_key uv run oh -p "Inspect this repository and list the top 3 refactors"

Install & Run

    # Clone and install
    git clone https://github.com/HKUDS/OpenHarness.git
    cd OpenHarness
    uv sync --extra dev

    # Example: use Kimi as the backend
    export ANTHROPIC_BASE_URL=https://api.moonshot.cn/anthropic
    export ANTHROPIC_API_KEY=your_kimi_api_key
    export ANTHROPIC_MODEL=kimi-k2.5

    # Launch
    oh          # if venv is activated
    uv run oh   # without activating venv

Non-Interactive Mode (Pipes & Scripts)

    # Single prompt → stdout
    oh -p "Explain this codebase"

    # JSON output for programmatic use
    oh -p "List all functions in main.py" --output-format json

    # Stream JSON events in real-time
    oh -p "Fix the bug" --output-format stream-json
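For automation, the non-interactive flags can be driven from Python. The following is a minimal sketch, not part of OpenHarness: the helper names are hypothetical, and it assumes that stream-json emits one JSON object per line, which is not confirmed here. The shape of the JSON payload is also left unspecified on purpose.

```python
import json
import subprocess
from typing import Iterable, Iterator

VALID_FORMATS = {"text", "json", "stream-json"}


def build_oh_command(prompt: str, output_format: str = "json") -> list:
    """Build the argv for a non-interactive `oh` run using -p and --output-format."""
    if output_format not in VALID_FORMATS:
        raise ValueError(f"unsupported output format: {output_format}")
    return ["oh", "-p", prompt, "--output-format", output_format]


def iter_json_lines(lines: Iterable[str]) -> Iterator[dict]:
    """Parse newline-delimited JSON, skipping blank lines.

    ASSUMPTION: stream-json output is one JSON object per line.
    """
    for line in lines:
        line = line.strip()
        if line:
            yield json.loads(line)


def run_oh_json(prompt: str):
    """Run `oh` once and decode its JSON output (requires `oh` on PATH)."""
    completed = subprocess.run(
        build_oh_command(prompt, "json"),
        capture_output=True,
        text=True,
        check=True,  # raise CalledProcessError on a non-zero exit code
    )
    return json.loads(completed.stdout)
```

Keeping command construction separate from execution makes the wrapper easy to unit-test without a model call.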

🔌 Provider Compatibility

OpenHarness supports three API formats: Anthropic (default), OpenAI-compatible (--api-format openai), and GitHub Copilot (--api-format copilot). The OpenAI format covers a wide range of providers.

Anthropic Format (default)

| Provider profile | Detection signal | Notes |

|---|---|---|

| Anthropic | Default when no custom ANTHROPIC_BASE_URL is set | Default Claude-oriented setup |

| Moonshot / Kimi | ANTHROPIC_BASE_URL contains moonshot or model starts with kimi | Anthropic-compatible endpoint |

| Vertex-compatible | Base URL contains vertex or aiplatform | Anthropic-style gateways on Vertex |

| Bedrock-compatible | Base URL contains bedrock | Bedrock-style deployments |

| Generic Anthropic-compatible | Any other explicit ANTHROPIC_BASE_URL | Proxies and internal gateways |
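The detection signals in the table can be read as a small decision function. This is an illustrative sketch only: the function name, the returned labels, and the order in which the checks run are assumptions, and the real detection logic in OpenHarness may differ.

```python
from typing import Optional


def detect_provider_profile(base_url: Optional[str], model: Optional[str] = None) -> str:
    """Map a base URL and model name to a provider profile label.

    ASSUMPTION: checks run top to bottom in the order of the table,
    with the Anthropic default evaluated last.
    """
    url = (base_url or "").lower()
    model_name = (model or "").lower()

    if "moonshot" in url or model_name.startswith("kimi"):
        return "moonshot"
    if "vertex" in url or "aiplatform" in url:
        return "vertex"
    if "bedrock" in url:
        return "bedrock"
    if url:
        # Any other explicit base URL: proxies and internal gateways.
        return "generic"
    # No custom ANTHROPIC_BASE_URL set.
    return "anthropic"
```

For example, `detect_provider_profile("https://api.moonshot.cn/anthropic")` yields `"moonshot"`, while calling it with no base URL and no model falls through to `"anthropic"`.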

OpenAI Format (--api-format openai)

Any provider implementing the OpenAI /v1/chat/completions API works out of the box:

| Provider | Base URL | Example models |

|---|---|---|

| Alibaba DashScope | https://dashscope.aliyuncs.com/compatible-mode/v1 | qwen3.5-flash, qwen3-max, deepseek-r1 |

| DeepSeek | https://api.deepseek.com | deepseek-chat, deepseek-reasoner |

| OpenAI | https://api.openai.com/v1 | gpt-4o, gpt-4o-mini |

| GitHub Models | https://models.inference.ai.azure.com | gpt-4o, Meta-Llama-3.1-405B-Instruct |

| SiliconFlow | https://api.siliconflow.cn/v1 | deepseek-ai/DeepSeek-V3 |

| Groq | https://api.groq.com/openai/v1 | llama-3.3-70b-versatile |

| Ollama (local) | http://localhost:11434/v1 | Any local model |

    # Example: use DashScope
    uv run oh --api-format openai \
      --base-url "https://dashscope.aliyuncs.com/compatible-mode/v1" \
      --api-key "sk-xxx" \
      --model "qwen3.5-flash"

    # Or via environment variables
    export OPENHARNESS_API_FORMAT=openai
    export OPENAI_API_KEY=sk-xxx
    export OPENHARNESS_BASE_URL=https://dashscope.aliyuncs.com/compatible-mode/v1
    export OPENHARNESS_MODEL=qwen3.5-flash
    uv run oh

GitHub Copilot Format (--api-format copilot)

Use your existing GitHub Copilot subscription as the LLM backend. Authentication uses GitHub's OAuth device flow — no API keys needed.

    # One-time login (opens browser for GitHub authorization)
    oh auth copilot-login

    # Then launch with Copilot as the provider
    uv run oh --api-format copilot

    # Or via environment variable
    export OPENHARNESS_API_FORMAT=copilot
    uv run oh

    # Check auth status
    oh auth status

    # Remove stored credentials
    oh auth copilot-logout

| Feature | Details |

|---|---|

| Auth method | GitHub OAuth device flow (no API key needed) |

| Token management | Automatic refresh of short-lived session tokens |

| Enterprise | Supports GitHub Enterprise via --github-domain flag |

| Models | Uses Copilot's default model selection |

| API | OpenAI-compatible chat completions under the hood |

🏗️ Harness Architecture

OpenHarness implements the core Agent Harness pattern across the following subsystems:

openharness/

engine/ # 🧠 Agent Loop — query → stream → tool-call → loop

tools/ # 🔧 43 Tools — file I/O, shell, search, web, MCP

skills/ # 📚 Knowledge — on-demand skill loading (.md files)

plugins/ # 🔌 Extensions — commands, hooks, agents, MCP servers

permissions/ # 🛡️ Safety — multi-level modes, path rules, command deny

hooks/ # ⚡ Lifecycle — PreToolUse/PostToolUse event hooks

commands/ # 💬 54 Commands — /help, /commit, /plan, /resume, ...

mcp/ # 🌐 MCP — Model Context Protocol client

memory/ # 🧠 Memory — persistent cross-session knowledge

tasks/ # 📋 Tasks — background task management

coordinator/ # 🤝 Multi-Agent — subagent spawning, team coordination

prompts/ # 📝 Context — system prompt assembly, CLAUDE.md, skills

config/ # ⚙️ Settings — multi-layer config, migrations

ui/ # 🖥️ React TUI — backend protocol + frontend

The Agent Loop

The heart of the harness. One loop, endlessly composable:

    while True:
        response = await api.stream(messages, tools)

        if response.stop_reason != "tool_use":
            break  # Model is done

        tool_results = []
        for tool_call in response.tool_uses:
            # Permission check → Hook → Execute → Hook → Result
            result = await harness.execute_tool(tool_call)
            tool_results.append(result)

        # Feed every result back so the model sees them on the next turn
        messages.append(tool_results)
        # Loop continues: model sees results, decides next action

The model decides what to do. The harness handles how — safely, efficiently, with full observability.
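To make the loop concrete, here is a self-contained toy version that runs without any API key. Everything in it is a stand-in for illustration: `ScriptedModel`, `Response`, `ToolUse`, and `run_agent_loop` are hypothetical names, the message dictionaries are simplified, and the real engine streams responses rather than returning them whole.

```python
import asyncio
from dataclasses import dataclass, field


@dataclass
class ToolUse:
    name: str
    arguments: dict


@dataclass
class Response:
    stop_reason: str
    text: str = ""
    tool_uses: list = field(default_factory=list)


class ScriptedModel:
    """Stand-in for an LLM client: replays canned responses in order."""

    def __init__(self, responses):
        self._responses = list(responses)

    async def stream(self, messages, tools):
        return self._responses.pop(0)


async def run_agent_loop(model, tools, prompt, max_turns=8):
    """Call the model, run any requested tools, and repeat until it stops."""
    messages = [{"role": "user", "content": prompt}]
    for _ in range(max_turns):
        response = await model.stream(messages, tools)
        messages.append({"role": "assistant", "content": response.text})
        if response.stop_reason != "tool_use":
            return response.text, messages  # Model is done
        results = [
            {"tool": call.name, "output": tools[call.name](**call.arguments)}
            for call in response.tool_uses
        ]
        messages.append({"role": "user", "content": results})
    raise RuntimeError("max_turns exceeded without a final answer")


def demo():
    model = ScriptedModel([
        Response("tool_use", tool_uses=[ToolUse("add", {"a": 2, "b": 3})]),
        Response("end_turn", text="2 + 3 = 5"),
    ])
    tools = {"add": lambda a, b: a + b}
    return asyncio.run(run_agent_loop(model, tools, "What is 2 + 3?"))
```

The `max_turns` guard mirrors the idea behind the `--max-turns` CLI flag: a loop driven by model output should always have an upper bound.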

Harness Flow

    flowchart LR
        U[User Prompt] --> C[CLI or React TUI]
        C --> R[RuntimeBundle]
        R --> Q[QueryEngine]
        Q --> A[Anthropic-compatible API Client]
        A -->|tool_use| T[Tool Registry]
        T --> P[Permissions + Hooks]
        P --> X[Files Shell Web MCP Tasks]
        X --> Q

✨ Features

🔧 Tools (43+)

| Category | Tools | Description |

|---|---|---|

| File I/O | Bash, Read, Write, Edit, Glob, Grep | Core file operations with permission checks |

| Search | WebFetch, WebSearch, ToolSearch, LSP | Web and code search capabilities |

| Notebook | NotebookEdit | Jupyter notebook cell editing |

| Agent | Agent, SendMessage, TeamCreate/Delete | Subagent spawning and coordination |

| Task | TaskCreate/Get/List/Update/Stop/Output | Background task management |

| MCP | MCPTool, ListMcpResources, ReadMcpResource | Model Context Protocol integration |

| Mode | EnterPlanMode, ExitPlanMode, Worktree | Workflow mode switching |

| Schedule | CronCreate/List/Delete, RemoteTrigger | Scheduled and remote execution |

| Meta | Skill, Config, Brief, Sleep, AskUser | Knowledge loading, configuration, interaction |

Every tool has:

- Pydantic input validation — structured, type-safe inputs

- Self-describing JSON Schema — models understand tools automatically

- Permission integration — checked before every execution

- Hook support — PreToolUse/PostToolUse lifecycle events
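The order in which these guarantees apply to a single call (permission check, pre-hook, execution, post-hook) can be sketched with the standard library alone. `ToolPipeline` and its fields are hypothetical names for illustration; this is not the OpenHarness API, and input validation is omitted to keep the sketch dependency-free.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class ToolPipeline:
    """Wrap one tool function with a permission check and lifecycle hooks."""

    tool: Callable[..., Any]
    is_allowed: Callable[[str, dict], bool]
    pre_hooks: list = field(default_factory=list)
    post_hooks: list = field(default_factory=list)

    def run(self, name: str, arguments: dict) -> dict:
        # 1. Permission check happens before anything else runs.
        if not self.is_allowed(name, arguments):
            return {"ok": False, "error": f"permission denied for {name}"}
        # 2. PreToolUse-style hooks see the call before execution.
        for hook in self.pre_hooks:
            hook(name, arguments)
        # 3. Execute the tool itself.
        output = self.tool(**arguments)
        # 4. PostToolUse-style hooks see the call and its output.
        for hook in self.post_hooks:
            hook(name, arguments, output)
        return {"ok": True, "output": output}


def demo():
    events = []
    pipeline = ToolPipeline(
        tool=lambda path: f"read {path}",
        is_allowed=lambda name, args: not args["path"].startswith("/etc/"),
        pre_hooks=[lambda name, args: events.append(("pre", name))],
        post_hooks=[lambda name, args, out: events.append(("post", name))],
    )
    allowed = pipeline.run("Read", {"path": "README.md"})
    denied = pipeline.run("Read", {"path": "/etc/passwd"})
    return allowed, denied, events
```

Note that a denied call returns before any hook fires, so hooks in this sketch only ever observe calls that passed the permission check.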

📚 Skills System

Skills are on-demand knowledge — loaded only when the model needs them:

Available Skills:

- commit: Create clean, well-structured git commits

- review: Review code for bugs, security issues, and quality

- debug: Diagnose and fix bugs systematically

- plan: Design an implementation plan before coding

- test: Write and run tests for code

- simplify: Refactor code to be simpler and more maintainable

- pdf: PDF processing with pypdf (from anthropics/skills)

- xlsx: Excel operations (from anthropics/skills)

- ... 40+ more

Compatible with anthropics/skills — just copy .md files to ~/.openharness/skills/.

🔌 Plugin System

Compatible with claude-code plugins. Tested with 12 official plugins:

| Plugin | Type | What it does |
|---|---|---|
| commit-commands | Commands | Git commit, push, PR workflows |
| security-guidance | Hooks | Security warnings on file edits |
| hookify | Commands + Agents | Create custom behavior hooks |
| feature-dev | Commands | Feature development workflow |
| code-review | Agents | Multi-agent PR review |
| pr-review-toolkit | Agents | Specialized PR review agents |

    # Manage plugins
    oh plugin list
    oh plugin install <source>
    oh plugin enable <name>

🤝 Ecosystem Workflows

OpenHarness is useful as a lightweight harness layer around Claude-style tooling conventions:

- OpenClaw-oriented workflows can reuse Markdown-first knowledge and command-driven collaboration patterns.

- Claude-style plugins and skills stay portable because OpenHarness keeps those formats familiar.

- ClawTeam-style multi-agent work maps well onto the built-in team, task, and background execution primitives.

For concrete usage ideas instead of generic claims, see docs/SHOWCASE.md.

🛡️ Permissions

Multi-level safety with fine-grained control:

| Mode | Behavior | Use Case |

|---|---|---|

| Default | Ask before write/execute | Daily development |

| Auto | Allow everything | Sandboxed environments |

| Plan Mode | Block all writes | Large refactors, review first |

Path-level rules in settings.json:

    {
      "permission": {
        "mode": "default",
        "path_rules": [{"pattern": "/etc/*", "allow": false}],
        "denied_commands": ["rm -rf /", "DROP TABLE *"]
      }
    }
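One plausible way to evaluate rules of this shape is glob matching. The sketch below is an assumption about semantics, not the documented behavior: it treats patterns as shell-style globs, lets the first matching path rule win, allows unmatched paths, and matches denied commands against the whole command string. The function names are hypothetical.

```python
import json
from fnmatch import fnmatchcase

SETTINGS = json.loads("""
{
  "permission": {
    "mode": "default",
    "path_rules": [{"pattern": "/etc/*", "allow": false}],
    "denied_commands": ["rm -rf /", "DROP TABLE *"]
  }
}
""")


def is_path_allowed(path: str, permission: dict) -> bool:
    """ASSUMPTION: first matching rule wins; unmatched paths are allowed."""
    for rule in permission.get("path_rules", []):
        if fnmatchcase(path, rule["pattern"]):
            return bool(rule["allow"])
    return True


def is_command_denied(command: str, permission: dict) -> bool:
    """ASSUMPTION: each denied entry is a glob over the full command string."""
    return any(
        fnmatchcase(command.strip(), pattern)
        for pattern in permission.get("denied_commands", [])
    )
```

Under these assumptions, `/etc/passwd` is blocked and `DROP TABLE users` is denied, while `rm -rf /tmp/build` passes because `rm -rf /` has no wildcard and only matches exactly. That gap is a reminder that deny lists based on string patterns are a coarse safety net rather than a sandbox.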

🖥️ Terminal UI

React/Ink TUI with full interactive experience:

- Command picker: Type / → arrow keys to select → Enter

- Permission dialog: Interactive y/n with tool details

- Mode switcher: /permissions → select from list

- Session resume: /resume → pick from history

- Animated spinner: Real-time feedback during tool execution

- Keyboard shortcuts: Shown at the bottom, context-aware

📡 CLI

oh [OPTIONS] COMMAND [ARGS]

Session: -c/--continue, -r/--resume, -n/--name

Model: -m/--model, --effort, --max-turns

Output: -p/--print, --output-format text|json|stream-json

Permissions: --permission-mode, --dangerously-skip-permissions

Context: -s/--system-prompt, --append-system-prompt, --settings

Advanced: -d/--debug, --mcp-config, --bare

Subcommands: oh mcp | oh plugin | oh auth

📊 Test Results

| Suite | Tests | Status |

|---|---|---|

| Unit + Integration | 114 | ✅ All passing |

| CLI Flags E2E | 6 | ✅ Real model calls |

| Harness Features E2E | 9 | ✅ Retry, skills, parallel, permissions |

| React TUI E2E | 3 | ✅ Welcome, conversation, status |

| TUI Interactions E2E | 4 | ✅ Commands, permissions, shortcuts |

| Real Skills + Plugins | 12 | ✅ anthropics/skills + claude-code/plugins |

    # Run all tests
    uv run pytest -q                              # 114 unit/integration
    python scripts/test_harness_features.py       # Harness E2E
    python scripts/test_real_skills_plugins.py    # Real plugins E2E

🔧 Extending OpenHarness

Add a Custom Tool

    from pydantic import BaseModel, Field
    from openharness.tools.base import BaseTool, ToolExecutionContext, ToolResult

    class MyToolInput(BaseModel):
        query: str = Field(description="Search query")

    class MyTool(BaseTool):
        name = "my_tool"
        description = "Does something useful"
        input_model = MyToolInput

        async def execute(self, arguments: MyToolInput, context: ToolExecutionContext) -> ToolResult:
            return ToolResult(output=f"Result for: {arguments.query}")

Add a Custom Skill

Create ~/.openharness/skills/my-skill.md:

    ---
    name: my-skill
    description: Expert guidance for my specific domain
    ---

    # My Skill

    ## When to use
    Use when the user asks about [your domain].

    ## Workflow
    1. Step one
    2. Step two
    ...
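To check a skill file before dropping it into the skills directory, the front matter can be parsed with a few lines of standard-library Python. This is a rough sketch with hypothetical function names: it handles only flat `key: value` pairs between `---` markers, and the actual OpenHarness loader may parse skill files differently.

```python
from pathlib import Path


def parse_skill(text: str) -> dict:
    """Split a skill file into front-matter fields and a Markdown body."""
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return {"meta": {}, "body": text}
    try:
        # Index of the closing '---' marker.
        end = next(
            i for i, line in enumerate(lines[1:], start=1) if line.strip() == "---"
        )
    except StopIteration:
        return {"meta": {}, "body": text}

    meta = {}
    for line in lines[1:end]:
        key, sep, value = line.partition(":")
        if sep:
            meta[key.strip()] = value.strip()
    body = "\n".join(lines[end + 1:]).strip()
    return {"meta": meta, "body": body}


def load_skills(directory) -> dict:
    """Parse every .md file in a directory, keyed by file stem."""
    return {
        path.stem: parse_skill(path.read_text(encoding="utf-8"))
        for path in sorted(Path(directory).glob("*.md"))
    }
```

A quick sanity check is that `parse_skill(...)["meta"]` contains both `name` and `description`, since those are the two fields the example skill declares.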

Add a Plugin

Create .openharness/plugins/my-plugin/.claude-plugin/plugin.json:

{ "name": "my-plugin", "version": "1.0.0", "description": "My custom plugin" }

Add commands in commands/*.md, hooks in hooks/hooks.json, agents in agents/*.md.
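The layout above can be scaffolded with a short script. This sketch assumes that commands/, hooks/, and agents/ live directly inside the plugin directory, next to .claude-plugin/; the function name is hypothetical, and it deliberately does not write hooks/hooks.json because that file's schema is not described here.

```python
import json
import tempfile
from pathlib import Path


def scaffold_plugin(root, name, description, version="1.0.0") -> Path:
    """Create a plugin skeleton under <root>/.openharness/plugins/<name>."""
    plugin_dir = Path(root) / ".openharness" / "plugins" / name
    manifest_path = plugin_dir / ".claude-plugin" / "plugin.json"
    manifest_path.parent.mkdir(parents=True, exist_ok=True)

    manifest = {"name": name, "version": version, "description": description}
    manifest_path.write_text(json.dumps(manifest, indent=2) + "\n", encoding="utf-8")

    # ASSUMPTION: these directories sit at the plugin root.
    for sub in ("commands", "hooks", "agents"):
        (plugin_dir / sub).mkdir(exist_ok=True)
    return plugin_dir


def demo():
    """Scaffold into a temporary directory and report what was created."""
    with tempfile.TemporaryDirectory() as tmp:
        plugin_dir = scaffold_plugin(tmp, "my-plugin", "My custom plugin")
        manifest = json.loads(
            (plugin_dir / ".claude-plugin" / "plugin.json").read_text(encoding="utf-8")
        )
        entries = sorted(p.name for p in plugin_dir.iterdir())
    return manifest, entries
```

Calling `scaffold_plugin(".", "my-plugin", "My custom plugin")` from a project root would create the same structure in place.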

🌍 Showcase

OpenHarness is most useful when treated as a small, inspectable harness you can adapt to a real workflow:

- Repo coding assistant for reading code, patching files, and running checks locally.

- Headless scripting tool for json and stream-json output in automation flows.

- Plugin and skill testbed for experimenting with Claude-style extensions.

- Multi-agent prototype harness for task delegation and background execution.

- Provider comparison sandbox across Anthropic-compatible backends.

See docs/SHOWCASE.md for short, reproducible examples.

🤝 Contributing

OpenHarness is a community-driven research project. We welcome contributions in:

| Area | Examples |

|---|---|

| Tools | New tool implementations for specific domains |

| Skills | Domain knowledge .md files (finance, science, DevOps...) |

| Plugins | Workflow plugins with commands, hooks, agents |

| Providers | Support for more LLM backends (OpenAI, Ollama, etc.) |

| Multi-Agent | Coordination protocols, team patterns |

| Testing | E2E scenarios, edge cases, benchmarks |

| Documentation | Architecture guides, tutorials, translations |

    # Development setup
    git clone https://github.com/HKUDS/OpenHarness.git
    cd OpenHarness
    uv sync --extra dev
    uv run pytest -q   # Verify everything works

Useful contributor entry points:

- CONTRIBUTING.md for setup, checks, and PR expectations

- CHANGELOG.md for user-visible changes

- docs/SHOWCASE.md for real-world usage patterns worth documenting

📄 License

MIT — see LICENSE.


Oh my Harness!

The model is the agent. The code is the harness.

Thanks for visiting ✨ OpenHarness!