🔍 About | 🔨 Setup | 🚢 Pretrain | ⛵ Finetune | 🚀 Quick Start | 🔗 Citation

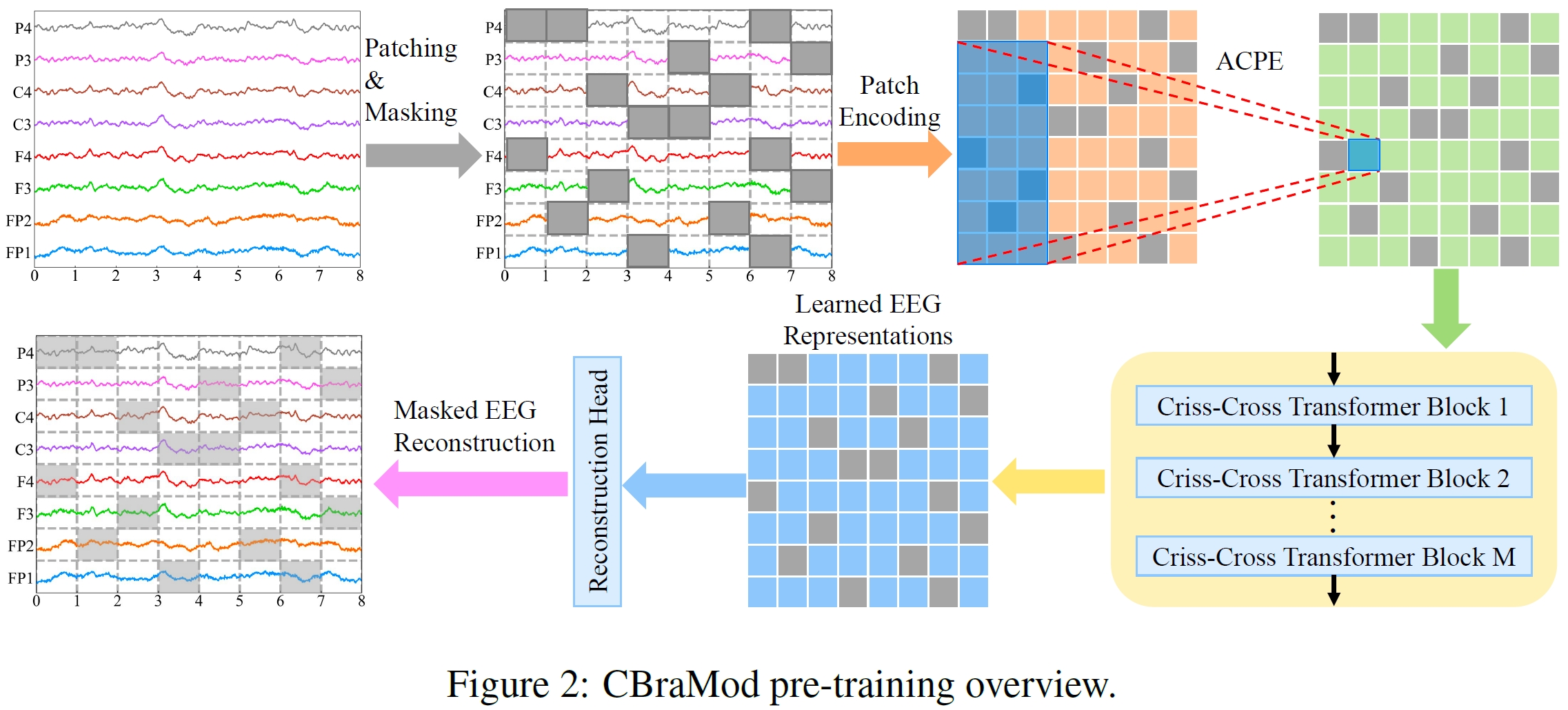

🔥 NEWS: Thank you for over 100 stars! We have further refined the code for improved stability. We appreciate your patience as we refine the implementation; ongoing EEG research continues to shape the development of a standardized pipeline.

🔥 NEWS: The paper "CBraMod: A Criss-Cross Brain Foundation Model for EEG Decoding" has been accepted by ICLR 2025!

🔍 About

We propose CBraMod, a novel EEG foundation model, for EEG decoding in various clinical and BCI applications. The preprint version of our paper is available on arXiv. The camera-ready version of the paper will be available on OpenReview.

🔨 Setup

Install Python.

Install PyTorch.

Install other requirements:

pip install -r requirements.txt

🚢 Pretrain

You can pretrain CBraMod on our pretraining dataset or your custom pretraining dataset using the following command:

python pretrain_main.py

We have released a pretrained checkpoint on Hugging Face🤗.

⛵ Finetune

You can finetune CBraMod on our selected downstream datasets using the following command:

python finetune_main.py

🚀 Quick Start

You can fine-tune the pretrained CBraMod on your custom downstream dataset using the following example code:

import torch
import torch.nn as nn
from models.cbramod import CBraMod
from einops.layers.torch import Rearrange

device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")
model = CBraMod().to(device)
model.load_state_dict(torch.load('pretrained_weights/pretrained_weights.pth', map_location=device))
model.proj_out = nn.Identity()
classifier = nn.Sequential(
    Rearrange('b c s p -> b (c s p)'),
    nn.Linear(22*4*200, 4*200),
    nn.ELU(),
    nn.Dropout(0.1),
    nn.Linear(4 * 200, 200),
    nn.ELU(),
    nn.Dropout(0.1),
    nn.Linear(200, 4),
).to(device)

# mock_eeg.shape = (batch_size, num_of_channels, time_segments, points_per_patch)
mock_eeg = torch.randn((8, 22, 4, 200)).to(device)

# logits.shape = (batch_size, num_of_classes)
logits = classifier(model(mock_eeg))
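When adapting the example above to a dataset with a different channel count or recording length, the first `nn.Linear` input size (`22*4*200` above) has to change with it. The sketch below shows one way to work out the expected input shape and the flattened feature count. The helper name `patch_shape` is hypothetical and not part of this repository. It assumes each patch holds 200 points, as in the mock input, and that the backbone output keeps a last dimension of 200 once `proj_out` is replaced by `nn.Identity()`, as the classifier above implies.

```python
def patch_shape(batch_size, num_channels, num_samples, points_per_patch=200):
    """Return the 4-D input shape and the flattened feature count.

    Hypothetical helper for illustration only; assumes the recording length
    divides evenly into patches of `points_per_patch` samples.
    """
    if num_samples % points_per_patch != 0:
        raise ValueError(
            f"num_samples={num_samples} is not a multiple of "
            f"points_per_patch={points_per_patch}"
        )
    time_segments = num_samples // points_per_patch
    # Layout used by the example: (batch, channels, segments, points per patch)
    input_shape = (batch_size, num_channels, time_segments, points_per_patch)
    # Size of the vector produced by Rearrange('b c s p -> b (c s p)')
    flat_features = num_channels * time_segments * points_per_patch
    return input_shape, flat_features


# 22 channels, 800 samples per channel, batch of 8 (matches the mock input above)
shape, flat = patch_shape(8, 22, 800)
print(shape, flat)
```

For the mock input this reproduces the shape `(8, 22, 4, 200)` and the `22*4*200` input size of the first linear layer; for other datasets, the returned feature count would replace that value.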

🔗 Citation

If you're using this repository in your research or applications, please cite using the following BibTeX:

@inproceedings{wang2025cbramod,
    title={{CB}raMod: A Criss-Cross Brain Foundation Model for {EEG} Decoding},
    author={Jiquan Wang and Sha Zhao and Zhiling Luo and Yangxuan Zhou and Haiteng Jiang and Shijian Li and Tao Li and Gang Pan},
    booktitle={The Thirteenth International Conference on Learning Representations},
    year={2025},
    url={https://openreview.net/forum?id=NPNUHgHF2w}
}